Selected Work

Multi-Task Learning for Bone X-Rays

AI Algorithms from Scratch

Liquid

TEDxIA Talk

GJSS Presentation (UGA)

Waypoint

Plantopia

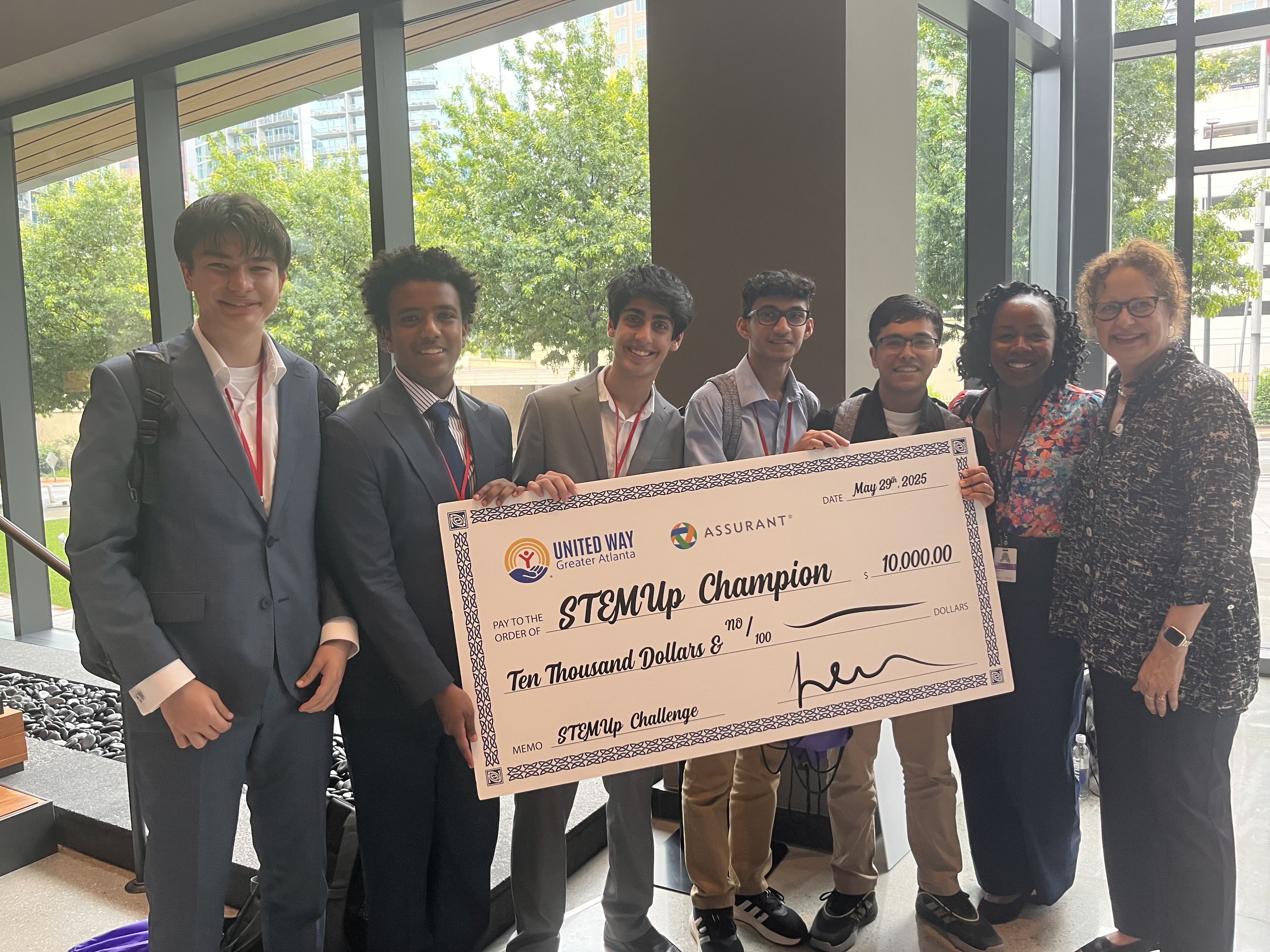

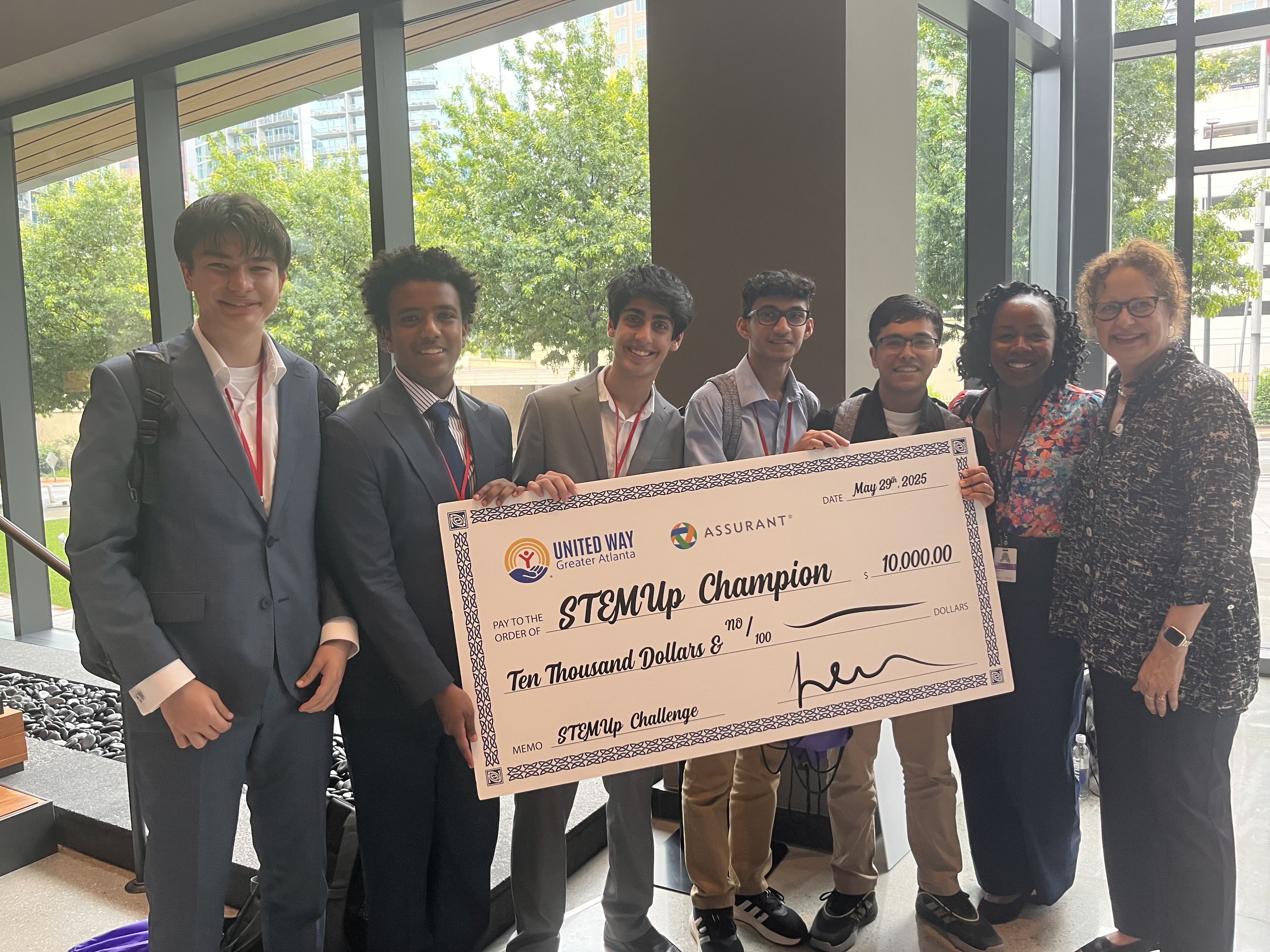

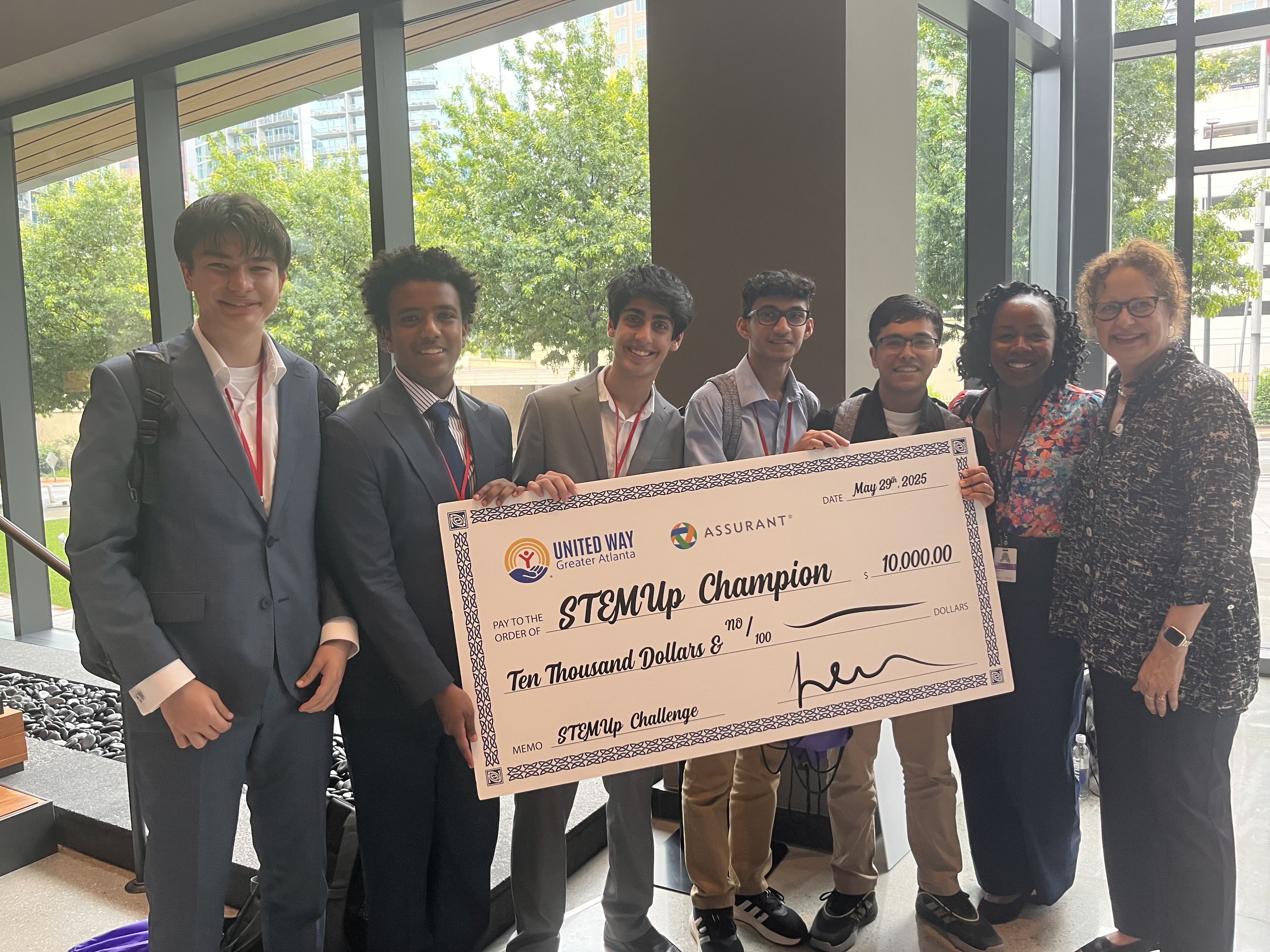

A $10,000 investor-funded venture bringing AI disease diagnosis to the edge of the farm.

Secured through successful pitches to corporate investors including NCR and Assurant.

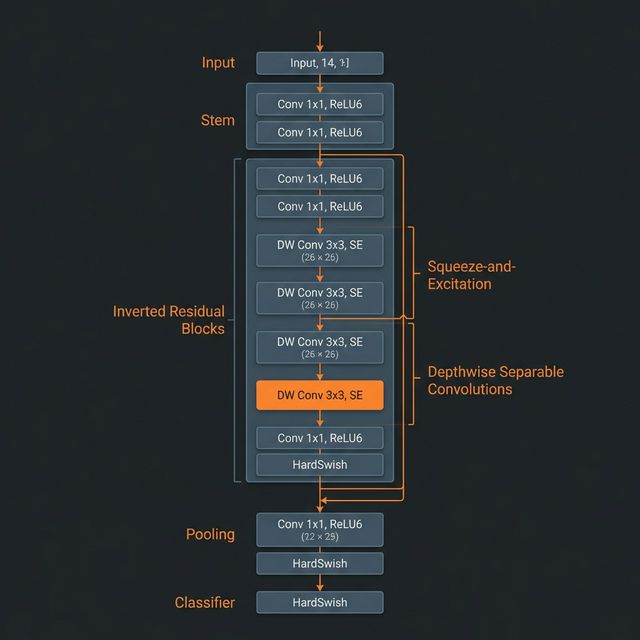

MobileNetV3 inference provides real-time feedback even in signal-dead zones.

The Origin

Plantopia was created to help gardeners take care of their plants. The idea came from watching my parents struggle with our garden: as new gardeners, they had trouble understanding each plant’s needs and how to meet them.

To solve this, I first talked with them and identified their major pain points, such as difficulty recognizing signs of plant stress and disease. From those conversations, I sketched out a solution: a web and mobile app paired with a physical probe.

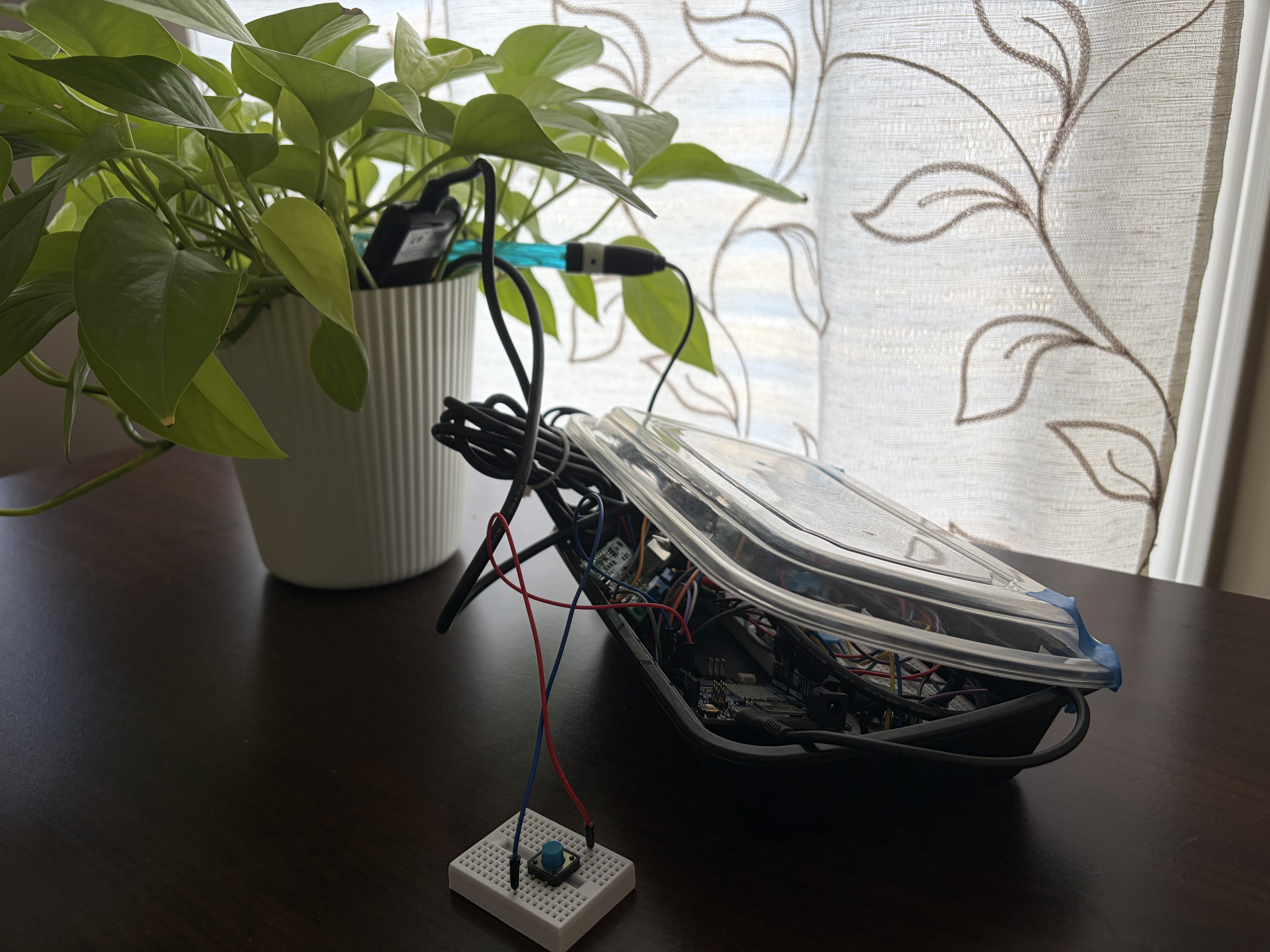

The Solution: Hardware Meets Software

A user inserts a lightweight probe into the soil beside a plant. The probe records data such as soil temperature, moisture, NPK levels, and humidity, then sends it to the user’s online Plantopia account.

Figure 1: Early-stage Arduino prototype featuring moisture and NPK sensors.

Once users log in to their Plantopia account on a phone or computer, they can view soil data for all of their plants, set reminders, view alerts, and run AI analysis on their sensor data and plant images to receive tailored recommendations.

Interactive Demo: Real-time sensor data visualization and AI-driven plant health diagnostics.

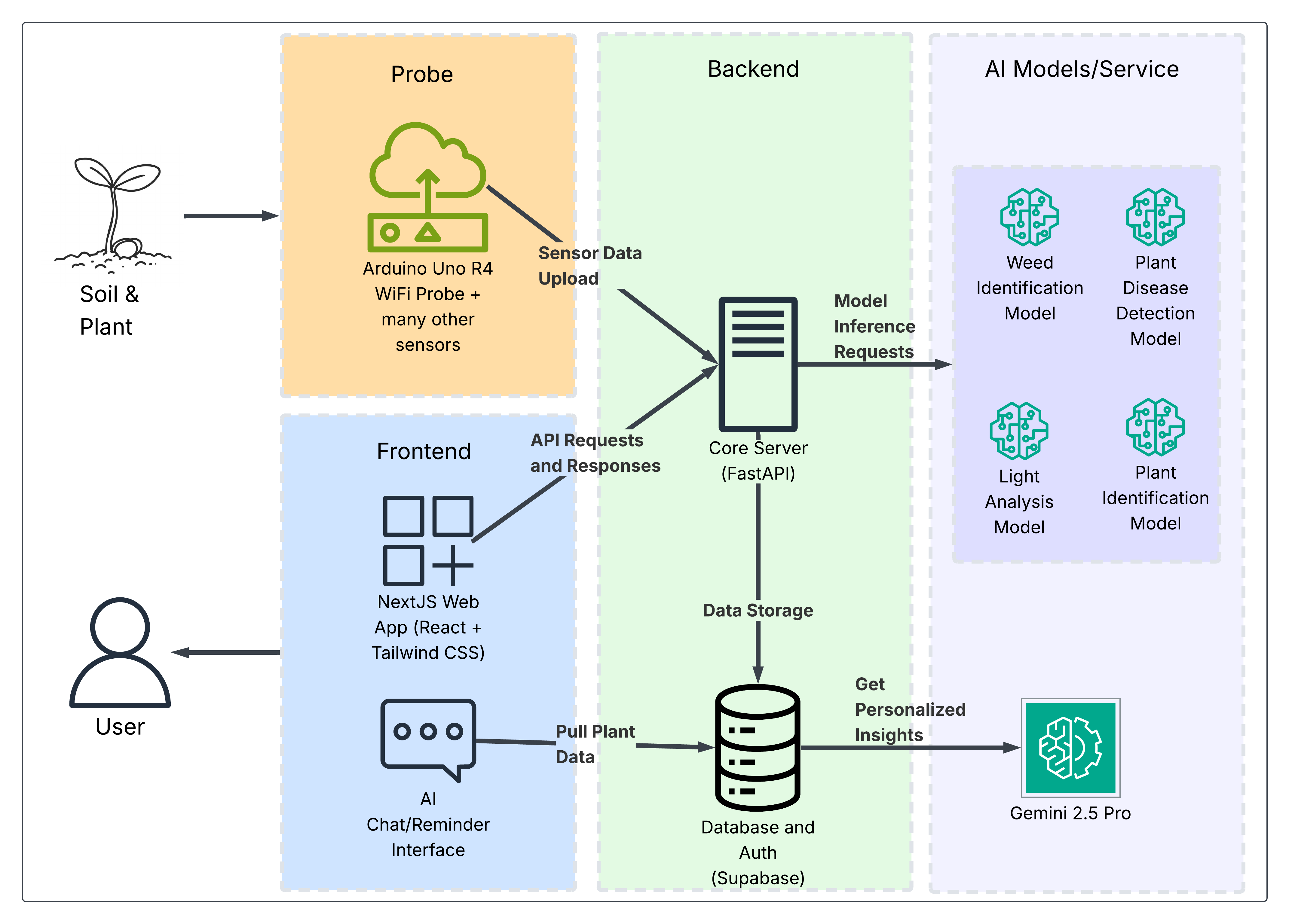

System Architecture

To handle the complexity of real-time sensor loops and multi-model AI inference, I designed a decoupled architecture that lets each service scale independently and keeps data propagation latency low.

Figure 2: End-to-End Data Lifecycle—from Edge Capture to AI Reasoning.

The lifecycle begins with the Arduino Uno R4-based probe, which acts as our edge gateway. It performs local signal processing before transmitting telemetry via WiFi to our FastAPI backend. The backend orchestrates the data flow, persisting raw metrics in Supabase while simultaneously triggering redundant AI worker services for specialized tasks like weed identification and disease classification. Final insights are synthesized through Gemini 2.5 Pro using a RAG-based context injection pipeline.
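The concurrent fan-out described above (persisting a reading while dispatching it to the AI workers) can be sketched with plain `asyncio`. This is a hedged, framework-free illustration rather than the project’s backend: `persist_reading`, `classify_disease`, and `identify_weeds` are hypothetical stand-ins for the Supabase write and the worker services, and the `asyncio.sleep` calls only simulate I/O latency.

```python
import asyncio

# Hypothetical stand-ins for real I/O calls (database insert, worker HTTP calls).
async def persist_reading(reading: dict) -> str:
    await asyncio.sleep(0.01)  # simulated database round trip
    return f"stored probe {reading['probe_id']}"

async def classify_disease(reading: dict) -> str:
    await asyncio.sleep(0.01)  # simulated model inference latency
    return "disease-check: ok"

async def identify_weeds(reading: dict) -> str:
    await asyncio.sleep(0.01)  # simulated model inference latency
    return "weed-check: ok"

async def handle_telemetry(reading: dict) -> list:
    # Run persistence and both worker calls concurrently; gather returns
    # results in the same order as its arguments.
    return await asyncio.gather(
        persist_reading(reading),
        classify_disease(reading),
        identify_weeds(reading),
    )

if __name__ == "__main__":
    print(asyncio.run(handle_telemetry({"probe_id": 7, "moisture": 0.42})))
```

Because the three coroutines wait on I/O rather than compute, `asyncio.gather` lets their latencies overlap instead of adding up; an `async def` FastAPI route could await the same kind of call.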

Technical Implementation

This project evolved into a multidisciplinary effort. While my colleagues focused on the electromechanical design of the hardware probe, I architected the end-to-end software ecosystem.

- Stack Choice (Next.js & Tailwind): We needed a unified framework for SEO-friendly static landing pages and a highly interactive dashboard. Next.js’s hybrid rendering allowed for fast initial loads of the soil data visualizations.

- Inference & Backend (FastAPI): I chose FastAPI specifically for its asynchronous I/O capabilities and native Pydantic support. This was critical for handling concurrent model inference requests from the probe while ensuring strict data-type validation for sensor telemetry.

- Data Persistence (Supabase): Supabase gave us a full PostgreSQL relational database with built-in real-time listeners, so whenever a probe uploaded a sample, the user’s dashboard reflected the change instantly without polling.

- LLM Reasoning (Gemini 2.5 Pro): Rather than simple text generation, we implemented a form of Retrieval-Augmented Generation (RAG). By injecting real-time sensor data and regional climate metadata into the context window, Gemini provides actionable, sensor-aware agronomic advice rather than generic gardening tips.
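In FastAPI, the strict telemetry validation described in the list above would normally be expressed as a Pydantic model. To keep this sketch dependency-free, it uses a standard-library dataclass for the same idea and then injects the validated reading into a prompt. The schema, the plausible-value ranges, and the `climate` keys are all hypothetical, and the retrieval step of a full RAG pipeline is omitted.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SoilReading:
    # Hypothetical telemetry schema; field names and ranges are illustrative.
    probe_id: int
    temperature_c: float
    moisture_pct: float
    nitrogen_ppm: float
    phosphorus_ppm: float
    potassium_ppm: float

    def __post_init__(self):
        # Reject implausible values before they reach the database or the LLM.
        if not 0.0 <= self.moisture_pct <= 100.0:
            raise ValueError(f"moisture_pct out of range: {self.moisture_pct}")
        if not -40.0 <= self.temperature_c <= 85.0:
            raise ValueError(f"temperature_c out of range: {self.temperature_c}")
        for name in ("nitrogen_ppm", "phosphorus_ppm", "potassium_ppm"):
            if getattr(self, name) < 0:
                raise ValueError(f"{name} must be non-negative")

def build_prompt(reading: SoilReading, climate: dict, question: str) -> str:
    # Inject live sensor values and regional climate metadata into the context
    # so the model reasons about this specific plant, not gardening in general.
    context = (
        f"Soil temperature: {reading.temperature_c:.1f} C\n"
        f"Soil moisture: {reading.moisture_pct:.0f}%\n"
        f"NPK (ppm): N={reading.nitrogen_ppm}, P={reading.phosphorus_ppm}, "
        f"K={reading.potassium_ppm}\n"
        f"Region: {climate['region']}, forecast high {climate['forecast_high_c']} C"
    )
    return (
        "You are an agronomy assistant. Base your advice on the sensor context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```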

Deep Dive: Machine Learning & AI

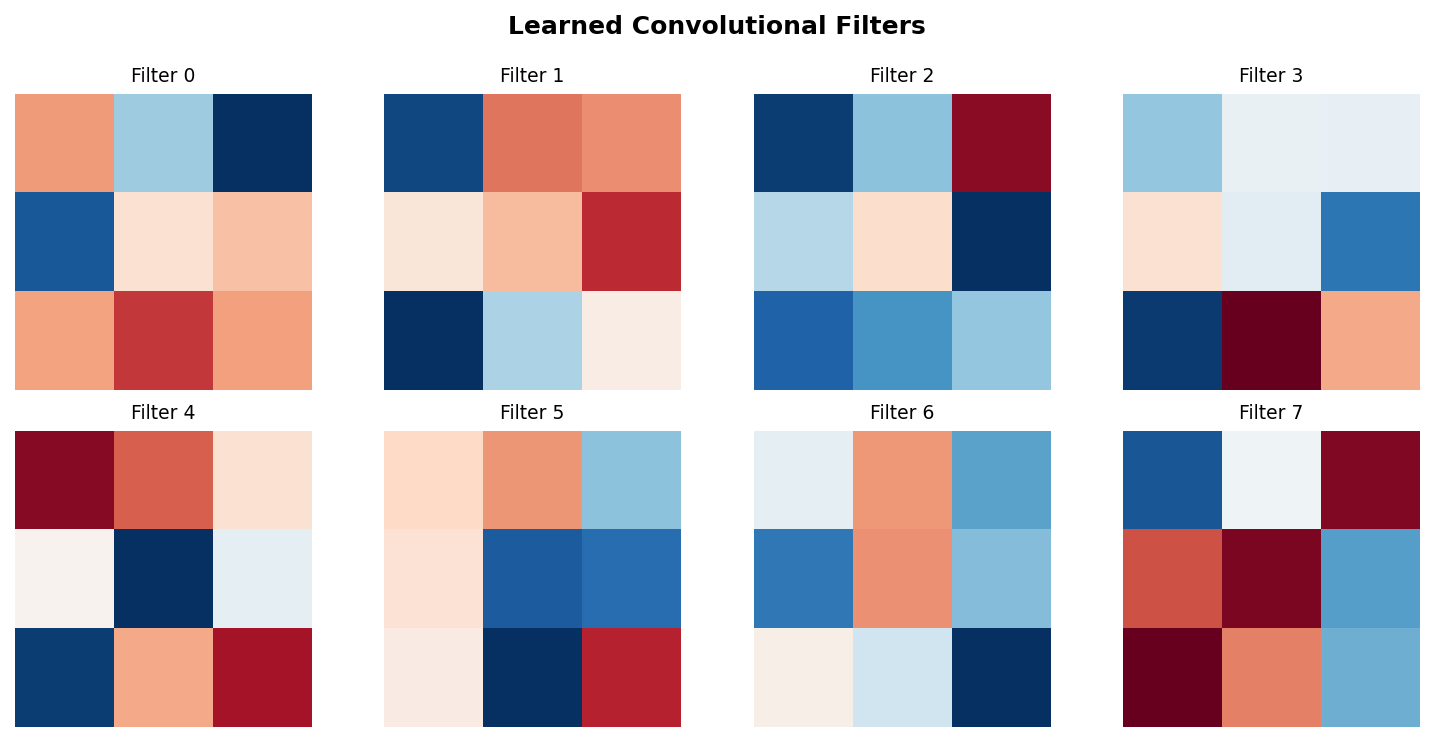

The core of Plantopia’s intelligence lies in its vision models. I learned to implement these through resources like DeepLearning.AI’s Convolutional Neural Networks course and various PyTorch tutorials.

Architectural Logic

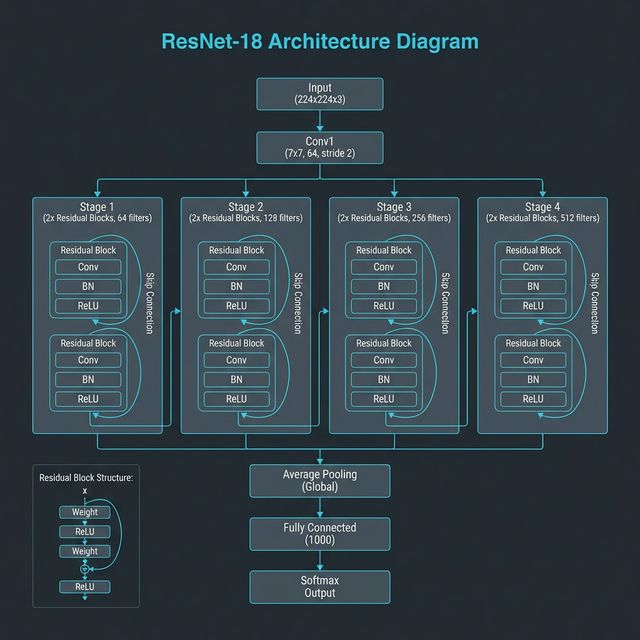

We utilize a combination of depthwise separable convolutions and residual learning to balance speed and accuracy.

Optimization Through Convolutional Arithmetic

To achieve high efficiency on edge devices, we decompose standard convolutions into Depthwise Separable components. This drastically reduces the number of operations (FLOPs) required per layer.

The theoretical cost reduction in computational complexity is defined by the ratio:

$$\text{Cost Ratio} = \frac{1}{N} + \frac{1}{D_k^2}$$

where $N$ is the number of output channels and $D_k$ is the spatial dimension of the kernel. This optimization allows us to run inference on mid-range ARM-based CPUs with sub-50ms latency.
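The ratio comes from counting multiply-accumulate operations for a $D_F \times D_F$ output map with $M$ input and $N$ output channels: a standard convolution costs $D_k^2 \cdot M \cdot N \cdot D_F^2$, while a depthwise convolution ($D_k^2 \cdot M \cdot D_F^2$) followed by a 1x1 pointwise convolution ($M \cdot N \cdot D_F^2$) costs their sum. A quick arithmetic check, with arbitrary example layer sizes:

```python
def standard_conv_macs(dk: int, m: int, n: int, df: int) -> int:
    # Each of the n output channels applies a dk x dk x m kernel at df*df positions.
    return dk * dk * m * n * df * df

def separable_conv_macs(dk: int, m: int, n: int, df: int) -> int:
    depthwise = dk * dk * m * df * df  # one dk x dk filter per input channel
    pointwise = m * n * df * df        # 1x1 convolution mixes channels
    return depthwise + pointwise

dk, m, n, df = 3, 64, 128, 56  # example layer sizes
ratio = separable_conv_macs(dk, m, n, df) / standard_conv_macs(dk, m, n, df)
print(f"measured {ratio:.4f} vs 1/N + 1/Dk^2 = {1 / n + 1 / dk**2:.4f}")
```

With 3x3 kernels and 128 output channels, the separable layer needs roughly 1/8.4 of the operations of the standard one.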

ResNet-18

ResNet-18 was our choice for high-fidelity classification. Its residual blocks address the degradation problem, in which adding layers to a deep network causes accuracy to saturate and then degrade. Instead of learning a target mapping H(x) directly, each block learns a residual F(x) = H(x) - x and outputs H(x) = F(x) + x through a skip connection. If the best mapping is close to the identity, the block only has to push F(x) toward zero, and the skip connection gives gradients a direct path backward, which preserves features across layers and mitigates vanishing gradients during backpropagation.
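A simplified NumPy sketch of the residual idea, assuming dense layers in place of the 3x3 convolutions and batch normalization used in a real ResNet-18 basic block (which also applies a ReLU after the addition, omitted here to match y = F(x) + x). `residual_block` is a hypothetical helper, not code from the project.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def residual_block(x, w1, w2):
    """y = F(x) + x, with F(x) = w2 @ relu(w1 @ x) as a dense stand-in for two conv layers."""
    f = w2 @ relu(w1 @ x)  # residual branch F(x)
    return f + x           # skip connection adds the input back unchanged

rng = np.random.default_rng(0)
x = rng.standard_normal(8)
zeros = np.zeros((8, 8))
# With a zero residual branch, the block is exactly the identity map.
print(np.allclose(residual_block(x, zeros, zeros), x))  # True
```

Since dy/dx = dF/dx + I, the identity term keeps the gradient from vanishing even when dF/dx is small.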

Figure: Architectural feature maps. ResNet-18: residual mapping y = F(x) + x. MobileNetV3: squeeze-and-excitation optimization.
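MobileNetV3’s squeeze-and-excitation (SE) module reweights channels using a global summary of the feature map. A minimal NumPy sketch, assuming a single unbatched (C, H, W) feature map, a reduction ratio of 4, and random illustrative weights; MobileNetV3 gates with a hard-sigmoid rather than a standard sigmoid.

```python
import numpy as np

def hard_sigmoid(x):
    # MobileNetV3's piecewise-linear gate: ReLU6(x + 3) / 6
    return np.clip(x + 3.0, 0.0, 6.0) / 6.0

def squeeze_excite(x, w1, b1, w2, b2):
    """Squeeze-and-excitation on one feature map x of shape (C, H, W).

    w1: (C // r, C) and w2: (C, C // r) for a reduction ratio r.
    """
    s = x.mean(axis=(1, 2))           # squeeze: global average pool -> (C,)
    z = np.maximum(w1 @ s + b1, 0.0)  # bottleneck FC + ReLU -> (C // r,)
    gate = hard_sigmoid(w2 @ z + b2)  # expand FC + hard-sigmoid -> (C,), values in [0, 1]
    return x * gate[:, None, None]    # excite: rescale each channel by its gate

rng = np.random.default_rng(0)
C, r = 16, 4
x = rng.standard_normal((C, 8, 8))
out = squeeze_excite(x, rng.standard_normal((C // r, C)), np.zeros(C // r),
                     rng.standard_normal((C, C // r)), np.zeros(C))
print(out.shape)  # (16, 8, 8)
```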

[!NOTE] Training involved optimizing for latency first, ensuring that diagnosis could happen in “signal-dead” zones common on rural farms.

Impact and Future Vision

This past summer, I presented this project to corporate leadership and secured $10,000 in seed funding from investors including NCR and Assurant.

Figure 3: Securing capital for pilot expansion through corporate pitching.

In parallel with our funding efforts, I established a partnership with Old Rucker Farms. They have agreed to a localized pilot program, deploying our prototype probes and online platform to provide critical real-world feedback on crop health trends and sensor-driven alerts.

What’s Next?

In the future, I would like to scale Plantopia further, creating a dedicated mobile app and taking the product to market. Additionally, AI features such as weed and light identification will be added, and the existing inference pipeline will be improved through continual learning from real-world plant data and sensor-aware edge computing.

Multi-Task Learning for Bone X-Rays

The Publication

[Peer-Reviewed] This work was accepted into the International Bioimaging Conference (BIOIMAGING 2026), part of the 19th International Joint Conference on Biomedical Engineering Systems and Technologies (BIOSTEC) under INSTICC.

The Challenge

Musculoskeletal abnormalities are a leading cause of global disability, yet manual radiological analysis suffers from high inter-observer variability and heavy workloads. The markers of abnormality are often subtle, calling for an expert eye and a transparent AI assistant that clinicians can trust.

The Approach

We introduced a high-performance deep learning framework leveraging Multi-Task Learning (MTL) and Class Activation Map (CAM) based ensembles. By training on the MURA dataset to simultaneously identify body parts and detect abnormalities, we “forced” the feature extractors to learn robust, medically relevant representations.
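A minimal sketch of how a multi-task objective ties the two tasks together, assuming a shared backbone that outputs one feature vector, a 7-way body-part head (MURA covers seven upper-extremity study types), and a binary abnormality head. The linear heads and the task weight `lam` are illustrative assumptions; the paper’s actual heads and loss weighting may differ.

```python
import numpy as np

BODY_PARTS = ["elbow", "finger", "forearm", "hand", "humerus", "shoulder", "wrist"]

def softmax_cross_entropy(logits, label):
    z = logits - logits.max()               # shift for numerical stability
    log_probs = z - np.log(np.exp(z).sum())
    return -log_probs[label]

def binary_cross_entropy(logit, target):
    # Stable BCE with logits: max(l, 0) - l*t + log(1 + exp(-|l|))
    return max(logit, 0.0) - logit * target + np.log1p(np.exp(-abs(logit)))

def multitask_loss(features, w_part, w_abn, part_label, abn_label, lam=1.0):
    """Both heads read the same backbone features, so both losses shape them.

    `lam` is a hypothetical weight balancing the abnormality task.
    """
    part_logits = w_part @ features        # (7,) body-part logits
    abn_logit = float(w_abn @ features)    # scalar abnormality logit
    return (softmax_cross_entropy(part_logits, part_label)
            + lam * binary_cross_entropy(abn_logit, abn_label))

rng = np.random.default_rng(0)
features = rng.standard_normal(16)         # stand-in for backbone output
w_part = rng.standard_normal((len(BODY_PARTS), 16)) * 0.1
w_abn = rng.standard_normal(16) * 0.1
print(multitask_loss(features, w_part, w_abn, BODY_PARTS.index("wrist"), 1.0))
```

Because the gradient of the summed loss flows into the shared backbone from both heads, features that only help one task are discouraged.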

[!IMPORTANT] Our novel ensemble method uses inferential weighting based on CAM localization focus—effectively giving more “vote” to models that pinpoint anomalies with higher spatial confidence.
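The exact scoring rule is not spelled out here, so the following is only one plausible reading of “localization focus”: score each model’s CAM by the share of its positive activation that lands in its top 10% of pixels, normalize those scores into weights, and take a weighted average of the models’ abnormality probabilities. `cam_focus`, `top_frac`, and the toy maps are hypothetical.

```python
import numpy as np

def cam_focus(cam, top_frac=0.1):
    """Hypothetical focus score: share of positive CAM mass in the top `top_frac` pixels.

    A sharply localized map scores near 1; a uniform map scores about top_frac.
    """
    flat = np.clip(cam, 0.0, None).ravel()
    total = flat.sum()
    if total == 0:
        return 0.0
    k = max(1, int(round(top_frac * flat.size)))
    top = np.sort(flat)[-k:]               # the k most activated pixels
    return float(top.sum() / total)

def cam_weighted_ensemble(probs, cams):
    """Weight each model's abnormality probability by its normalized focus score."""
    scores = np.array([cam_focus(c) for c in cams])
    weights = scores / scores.sum()        # assumes at least one non-zero score
    return float(np.dot(weights, probs)), weights

focused = np.zeros((10, 10))
focused[4, 4] = 1.0                        # toy map: one sharp hot spot
diffuse = np.ones((10, 10))                # toy map: activation everywhere
p, w = cam_weighted_ensemble(np.array([0.9, 0.3]), [focused, diffuse])
print(round(p, 3), np.round(w, 3))
```

Unlike majority voting, a model whose CAM is diffuse still contributes, but with a smaller say.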

The Result

Our MTL-enhanced models achieved a 6.0% improvement in Cohen’s Kappa over baseline single-task models. The final ensemble boosted performance by a further 4.6%, indicating that “smarter” CAM-weighted averaging outperforms simple majority voting. The project also includes an interactive dashboard for visualizing these results in real time.
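For reference, Cohen’s kappa measures agreement between predictions and labels after subtracting the agreement expected by chance, which makes it more informative than raw accuracy when classes are imbalanced. A from-scratch sketch:

```python
import numpy as np

def cohens_kappa(y_true, y_pred):
    """Cohen's kappa: (p_o - p_e) / (1 - p_e). Undefined when p_e == 1."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    labels = np.union1d(y_true, y_pred)
    p_o = np.mean(y_true == y_pred)  # observed agreement
    # Chance agreement if both label sequences were independent with these base rates
    p_e = sum(np.mean(y_true == c) * np.mean(y_pred == c) for c in labels)
    return (p_o - p_e) / (1.0 - p_e)

print(cohens_kappa([1, 1, 0, 0], [1, 0, 0, 0]))  # 0.5
```

Here accuracy is 0.75, but chance agreement is already 0.5, so kappa credits only half of the remaining headroom.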

AI Algorithms from Scratch

Building the 'engines' of machine learning from first principles—no frameworks, just raw NumPy and calculus.

Most machine learning practitioners rely on high-level abstractions like PyTorch or TensorFlow. While efficient, these libraries often hide the underlying mechanics. This project is my attempt to build the “engines” from scratch: implementing the gradients, the backpropagation, and the decision logic from the ground up using nothing but NumPy.

The Philosophy of “No Magic”

The core idea was to stop treating .fit() and .predict() as black boxes. By rebuilding these algorithms, I had to confront the raw linear algebra and calculus that powers them.

This transition from “using” AI to “building” AI revealed things that generic tutorials often skip—like the numerical instability of certain activation functions or the extreme bookkeeping required for recurrent memory.
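A small example of the numerical instability mentioned above: a naive softmax overflows for large logits, while subtracting the maximum logit first gives the mathematically identical result without overflow. The same trick applies to the sigmoid by branching on the sign of the input. Function names are illustrative.

```python
import numpy as np

def naive_softmax(z):
    e = np.exp(z)              # overflows to inf for large logits
    return e / e.sum()

def stable_softmax(z):
    e = np.exp(z - np.max(z))  # shifting by the max leaves the result unchanged
    return e / e.sum()

def stable_sigmoid(x):
    # Use exp(-x) for x >= 0 and exp(x) for x < 0, so exp never sees a large positive argument.
    x = np.asarray(x, dtype=float)
    out = np.empty_like(x)
    pos = x >= 0
    out[pos] = 1.0 / (1.0 + np.exp(-x[pos]))
    ex = np.exp(x[~pos])
    out[~pos] = ex / (1.0 + ex)
    return out

z = np.array([1000.0, 1000.0])
with np.errstate(over="ignore", invalid="ignore"):
    print(naive_softmax(z))    # [nan nan], because inf / inf
print(stable_softmax(z))       # [0.5 0.5]
```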

Technical Spotlight

Linear Regression

Finding the line that minimizes Mean Squared Error. The starting point for all gradient-based learning.
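A minimal from-scratch sketch of the idea, assuming plain batch gradient descent (the project may use a different update schedule or the closed-form solution). The synthetic data and hyperparameters are illustrative.

```python
import numpy as np

def fit_linear_regression(X, y, lr=0.1, epochs=2000):
    """Batch gradient descent on MSE = mean((Xw + b - y)^2)."""
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        err = X @ w + b - y             # residuals, shape (n,)
        grad_w = (2.0 / n) * X.T @ err  # dMSE/dw
        grad_b = (2.0 / n) * err.sum()  # dMSE/db
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

rng = np.random.default_rng(0)
X = rng.uniform(-1, 1, size=(200, 1))
y = 3.0 * X[:, 0] + 2.0 + rng.normal(0, 0.05, size=200)  # true slope 3, intercept 2
w, b = fit_linear_regression(X, y)
print(w, b)  # approximately [3.] and 2.
```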

Logistic Regression

Classification via the sigmoid squash, which maps raw linear outputs to probabilities in the range (0, 1).
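A from-scratch sketch under the same assumptions (batch gradient descent, illustrative hyperparameters and synthetic data). For binary cross-entropy paired with a sigmoid, the gradient with respect to the logit simplifies to (p - y), which keeps the update as short as linear regression’s.

```python
import numpy as np

def sigmoid(z):
    # Clip to avoid overflow in exp for extreme logits.
    return 1.0 / (1.0 + np.exp(-np.clip(z, -500, 500)))

def fit_logistic_regression(X, y, lr=0.5, epochs=2000):
    """Gradient descent on mean binary cross-entropy; y holds 0/1 labels."""
    n, d = X.shape
    w = np.zeros(d)
    b = 0.0
    for _ in range(epochs):
        p = sigmoid(X @ w + b)       # predicted probabilities
        grad_w = X.T @ (p - y) / n   # BCE gradient through the sigmoid is (p - y)
        grad_b = np.mean(p - y)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict(X, w, b, threshold=0.5):
    return (sigmoid(X @ w + b) >= threshold).astype(int)

rng = np.random.default_rng(1)
X = np.vstack([rng.normal(-2, 1, (100, 2)), rng.normal(2, 1, (100, 2))])
y = np.array([0] * 100 + [1] * 100)
w, b = fit_logistic_regression(X, y)
print("train accuracy:", np.mean(predict(X, w, b) == y))
```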

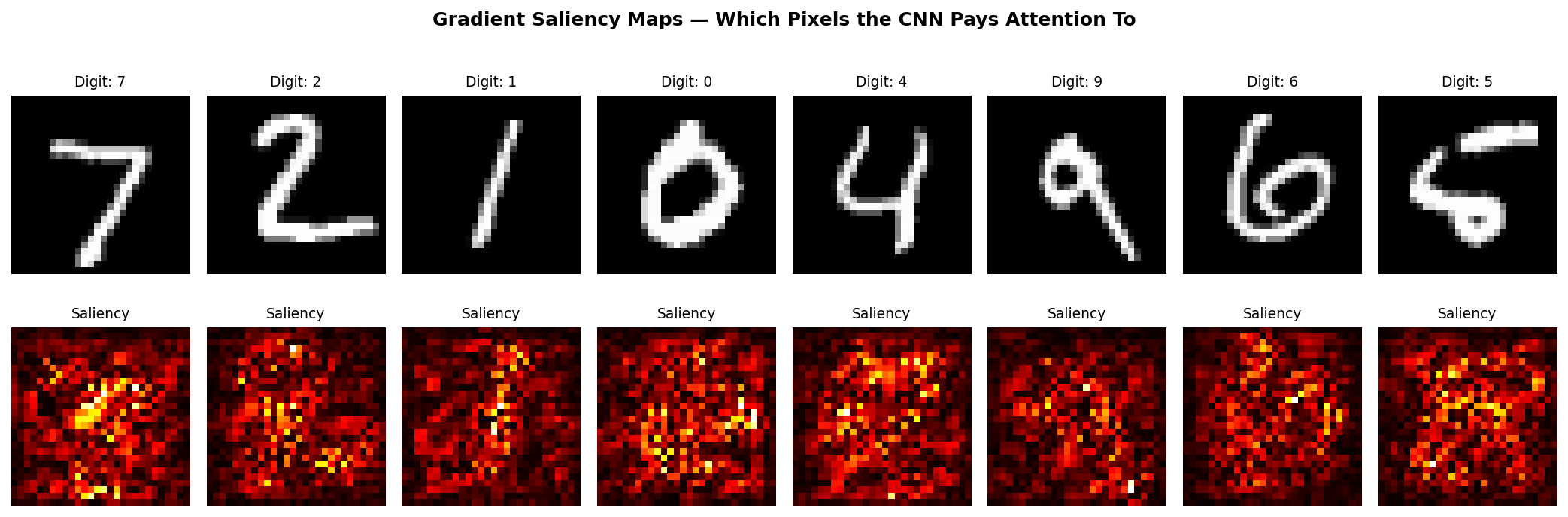

Visual Validation Layer

"Raw insights from the training pipeline, visualizing the internal state of the scratch-built AI."

The “Master Runner” System

To showcase these implementations, I built an interactive Master Runner script (run_all.py). It serves as an orchestration layer that allows users to pick any algorithm and watch it train in real-time.
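The source does not show how `run_all.py` is organized; one common way to build such a menu is a name-to-function registry. The sketch below is a hypothetical layout, and the demo functions only return placeholder strings instead of training anything.

```python
from typing import Callable, Dict

# Hypothetical registry; the real run_all.py may be structured differently.
ALGORITHMS: Dict[str, Callable[[], str]] = {}

def register(name: str):
    """Decorator that adds a training demo to the menu under `name`."""
    def wrap(fn: Callable[[], str]) -> Callable[[], str]:
        ALGORITHMS[name] = fn
        return fn
    return wrap

@register("linear_regression")
def demo_linear() -> str:
    return "training linear regression..."

@register("logistic_regression")
def demo_logistic() -> str:
    return "training logistic regression..."

def run(name: str) -> str:
    if name not in ALGORITHMS:
        raise KeyError(f"unknown algorithm {name!r}; choose from {sorted(ALGORITHMS)}")
    return ALGORITHMS[name]()

for i, key in enumerate(sorted(ALGORITHMS), start=1):
    print(f"{i}. {key}")
print(run("linear_regression"))
```

Adding a new algorithm then only requires decorating its demo function; the menu picks it up automatically.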

Training these models on MNIST (reaching ~96% accuracy) and performing character-level language modeling on Hamlet showed that even without GPU acceleration (CUDA) or other framework-level optimizations, the fundamental math is enough to build meaningful intelligence.

Impact and Key Learnings

This project served as a rigorous “technical bible” for my AI journey. It enabled me to:

- Debug with Confidence: Understanding how gradients flow through layers makes debugging high-level frameworks like PyTorch intuitive rather than trial-and-error.

- Architect Optimization: Implementing algorithms like Adam and Gradient Clipping from scratch revealed the importance of numerical stability in deep architectures.

- High-Performance NumPy: Leveraging `einsum` and `stride_tricks` taught me how to write highly vectorized Python code that approaches C speed for specific tensor operations.
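The last two items in the list above can be sketched together in NumPy. This is an illustrative version, not the project’s code: `conv2d_valid` pairs a strided window view with `einsum`, and the `Adam` class tracks state for a single parameter array for brevity.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def conv2d_valid(x, k):
    """2D cross-correlation (what DL libraries call convolution) without padding.

    sliding_window_view returns a strided view of shape (H-kh+1, W-kw+1, kh, kw)
    without copying; einsum multiplies each window by the kernel and sums.
    """
    windows = sliding_window_view(x, k.shape)
    return np.einsum("ijkl,kl->ij", windows, k)

def clip_by_global_norm(grads, max_norm):
    """Rescale gradient arrays so their combined L2 norm is at most max_norm."""
    total = np.sqrt(sum(float(np.sum(g * g)) for g in grads))
    scale = min(1.0, max_norm / (total + 1e-12))
    return [g * scale for g in grads]

class Adam:
    """Adam for a single parameter array (state kept on the instance)."""
    def __init__(self, lr=1e-3, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.b1, self.b2, self.eps = lr, beta1, beta2, eps
        self.m = self.v = None
        self.t = 0

    def step(self, param, grad):
        if self.m is None:
            self.m = np.zeros_like(param)
            self.v = np.zeros_like(param)
        self.t += 1
        self.m = self.b1 * self.m + (1 - self.b1) * grad       # first-moment estimate
        self.v = self.b2 * self.v + (1 - self.b2) * grad ** 2  # second-moment estimate
        m_hat = self.m / (1 - self.b1 ** self.t)               # bias correction
        v_hat = self.v / (1 - self.b2 ** self.t)
        return param - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)

# Minimize sum(p**2) with clipped gradients.
p = np.array([5.0, -3.0])
opt = Adam(lr=0.05)
for _ in range(1000):
    (g,) = clip_by_global_norm([2 * p], max_norm=1.0)
    p = opt.step(p, g)
print(p)
```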

Liquid

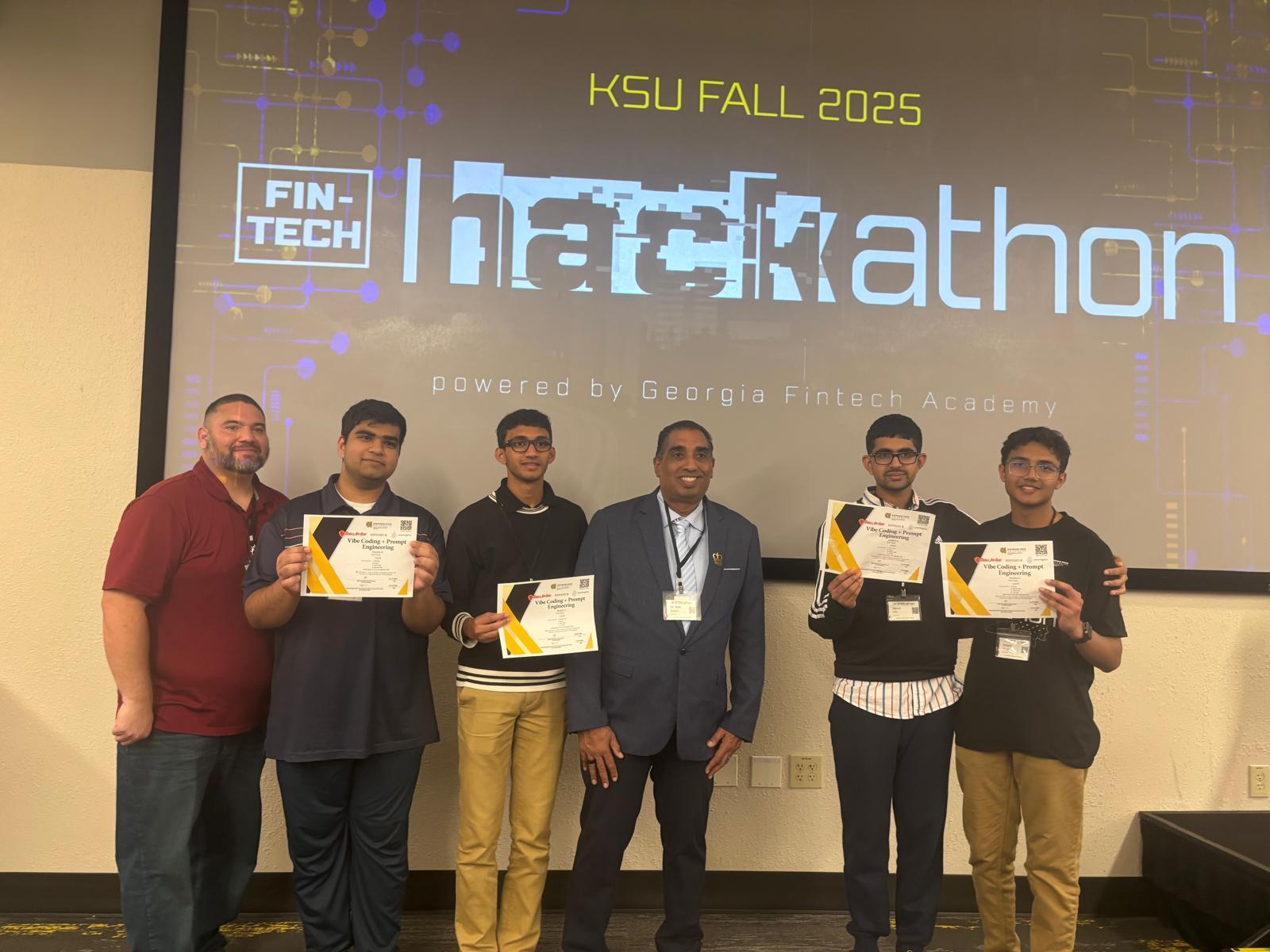

1st Place at the KSU Fintech Hackathon.

The Challenge

At the 2025 KSU Fintech Hackathon, we set out to build a streamlined fintech solution focused entirely on user accessibility and transparent transaction tracking. We entered as a high school team, competing against over 50 collegiate teams from institutions like Georgia Tech, Kennesaw State University, and Georgia State University.

The Methodology: Percolation Theory

Rather than just building a standard web app wrapper, we engineered a backend routing methodology using Percolation Theory.

This allowed us to model stablecoin transactions across dynamic financial nodes. By applying these models, we could mathematically determine the “best possible route” (lowest latency, highest probability of success) for a transaction to propagate through a shifting network of financial exchanges.
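The team’s percolation model lives in the linked write-up and is not reproduced here. As a hedged illustration of the underlying ideas, two standard building blocks follow: finding the route with the highest success probability (Dijkstra on -log p, assuming independent links with p > 0) and a Monte Carlo bond-percolation estimate of whether any route survives. The toy network and its probabilities are made up, and latency is not modeled.

```python
import heapq
import math
import random

# Hypothetical toy network: link -> probability the link is "open" (the hop succeeds).
EDGES = {("A", "B"): 0.9, ("B", "C"): 0.9, ("A", "C"): 0.5}

def neighbors(edges):
    graph = {}
    for (u, v), p in edges.items():
        graph.setdefault(u, []).append((v, p))
        graph.setdefault(v, []).append((u, p))  # links treated as undirected
    return graph

def most_reliable_path(edges, src, dst):
    """Maximize the product of link probabilities via Dijkstra on -log(p)."""
    graph = neighbors(edges)
    heap = [(0.0, src, [src])]
    done = set()
    while heap:
        cost, node, path = heapq.heappop(heap)
        if node == dst:
            return path, math.exp(-cost)
        if node in done:
            continue
        done.add(node)
        for nxt, p in graph.get(node, []):
            if nxt not in done:
                heapq.heappush(heap, (cost - math.log(p), nxt, path + [nxt]))
    return None, 0.0

def percolation_estimate(edges, src, dst, trials=20000, seed=0):
    """Bond percolation: open each link with its probability, then test src-dst connectivity."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        graph = neighbors({e: p for e, p in edges.items() if rng.random() < p})
        stack, seen = [src], {src}
        while stack:
            for nxt, _ in graph.get(stack.pop(), []):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        hits += dst in seen
    return hits / trials

path, prob = most_reliable_path(EDGES, "A", "C")
print(path, round(prob, 2))                   # ['A', 'B', 'C'] 0.81
print(percolation_estimate(EDGES, "A", "C"))  # near 1 - 0.5 * (1 - 0.81) = 0.905
```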

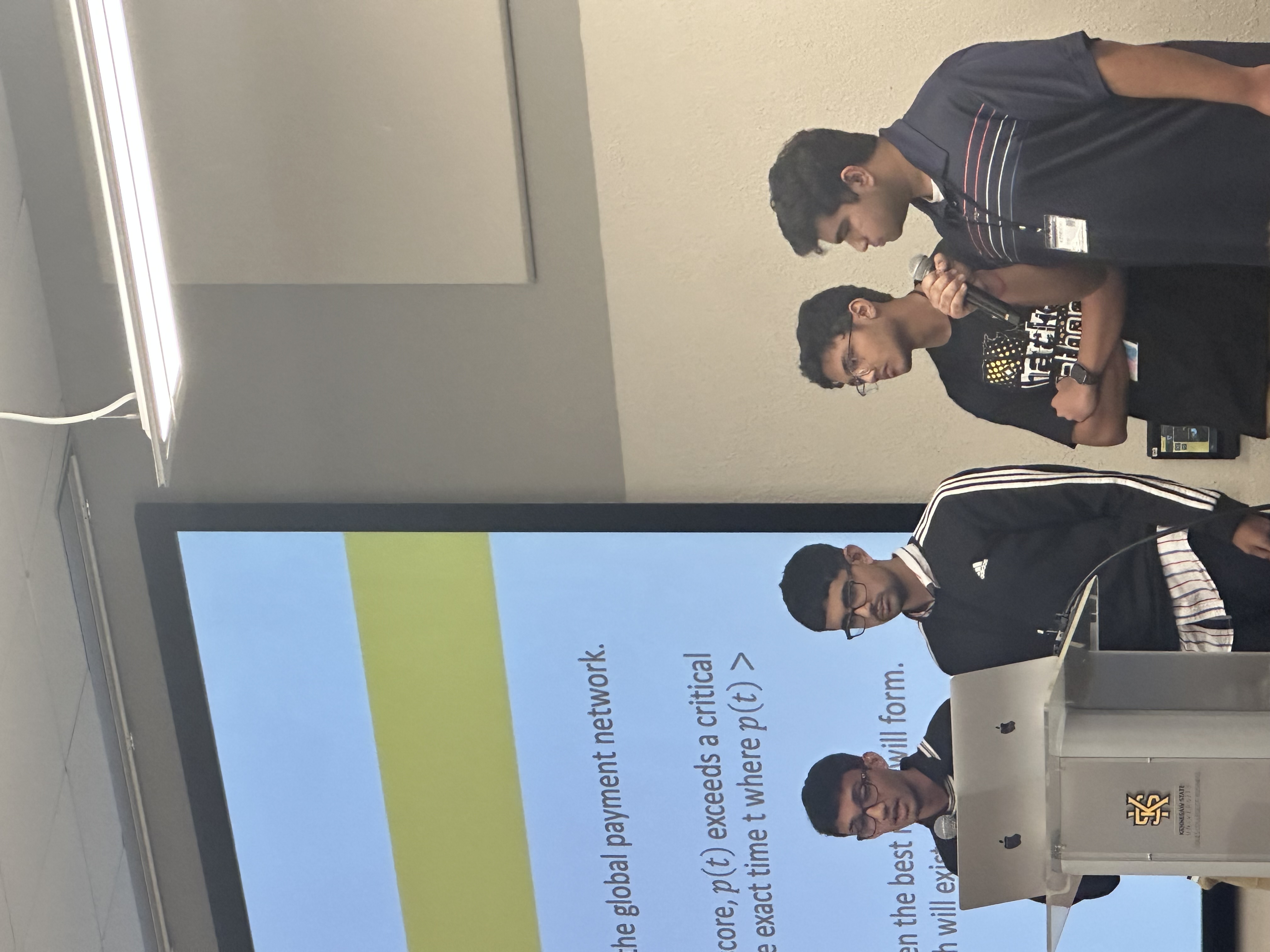

Figure 1: Presenting the rapidly prototyped Liquid architecture and the underlying mathematical models.

Proof of Concept

Here is our write-up of the proof of concept: View the Percolation Theory Document

The Outcome

Our unique approach to network routing combined with an accessible UI successfully bridged the gap between complex blockchain math and user-friendly fintech.

The judges awarded our team 1st place and the $4,000 grand prize, validating our technical approach and the viability of architecting conceptually advanced systems through rapid prototyping.

TEDxIA Talk

How AI is Changing What it Means to Be Smart.

Extensive drafting, iterative feedback, and stage practice to perfect the delivery.

Featured speaker selected to present at the TEDx event hosted at FCS Innovation Academy.

The Event

I was honored to be selected as a featured speaker for TEDxIA, held at FCS Innovation Academy. The journey to the stage encompassed a grueling but incredibly rewarding 4-month process of preparation, refinement, and practice.

The Message: How AI is Changing What it Means to Be Smart

As artificial intelligence models become increasingly capable of performing complex analytical and creative tasks, the traditional metrics we use to define “intelligence” are being challenged. My presentation explored:


- The Evolution of Intelligence: How rote memorization and basic computation are being outsourced to machines.

- The New Paradigm of Smart: Shifting the focus toward critical thinking, emotional intelligence, and the ability to ask the right questions (prompt engineering and system design).

- Implications for Education: What this means for students today and how educational systems must adapt to foster uniquely human skills.

Delivering this message on the TEDx stage allowed me to share my passion for AI ethics and its societal impact with a broad and engaged audience.

GJSS Presentation (UGA)

Presenting research on the Impact of Hallucinations on Multi-Agent Systems.

The Symposium

I was invited to present my research at the Georgia Junior STEM Symposium (GJSS), hosted by the University of Georgia (UGA). This prestigious event gathers top student researchers from across the state to share their findings with academic and industry experts.

The Research: Hallucinations in Multi-Agent Systems

My presentation focused on a critical vulnerability in modern AI architectures: The impact of hallucinations on multi-agent systems. As developers increasingly string together multiple Large Language Models (LLMs) to perform complex workflows, a single hallucination by one agent can dramatically compound and derail the entire system’s objective.

I explored strategies for:

- Detecting initial generation errors before they propagate between agents.

- Implementing consensus mechanisms and self-correction loops within the agent swarm.

- Measuring the cascading failure rate in deeply chained agentic workflows.
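A back-of-the-envelope model of why errors compound, under the strong (and optimistic) assumption that each agent step hallucinates independently with probability h: an n-step chain succeeds with probability (1 - h)^n, and a 3-agent majority vote at each step fails only when at least two agents are wrong. The values h = 0.1 and n = 10 are illustrative, not measured results.

```python
from math import comb

def chain_success(h: float, n: int) -> float:
    """Probability an n-step chain has no error when each step errs independently with rate h."""
    return (1.0 - h) ** n

def majority_error(h: float, k: int = 3) -> float:
    """Per-step error when k independent agents vote (k odd) and a wrong majority fails the step."""
    return sum(comb(k, i) * h**i * (1.0 - h) ** (k - i) for i in range(k // 2 + 1, k + 1))

h, n = 0.1, 10
print(f"single agent per step: {chain_success(h, n):.3f}")                   # 0.349
print(f"3-agent majority per step: {chain_success(majority_error(h), n):.3f}")  # 0.753
```

Correlated failures, such as several agents sharing one model and one flawed context, violate the independence assumption and would shrink the benefit of voting.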

Presentation Deck

Engaging with the audience at GJSS provided invaluable feedback and highlighted new avenues for ensuring the safety and reliability of autonomous AI systems.

Waypoint

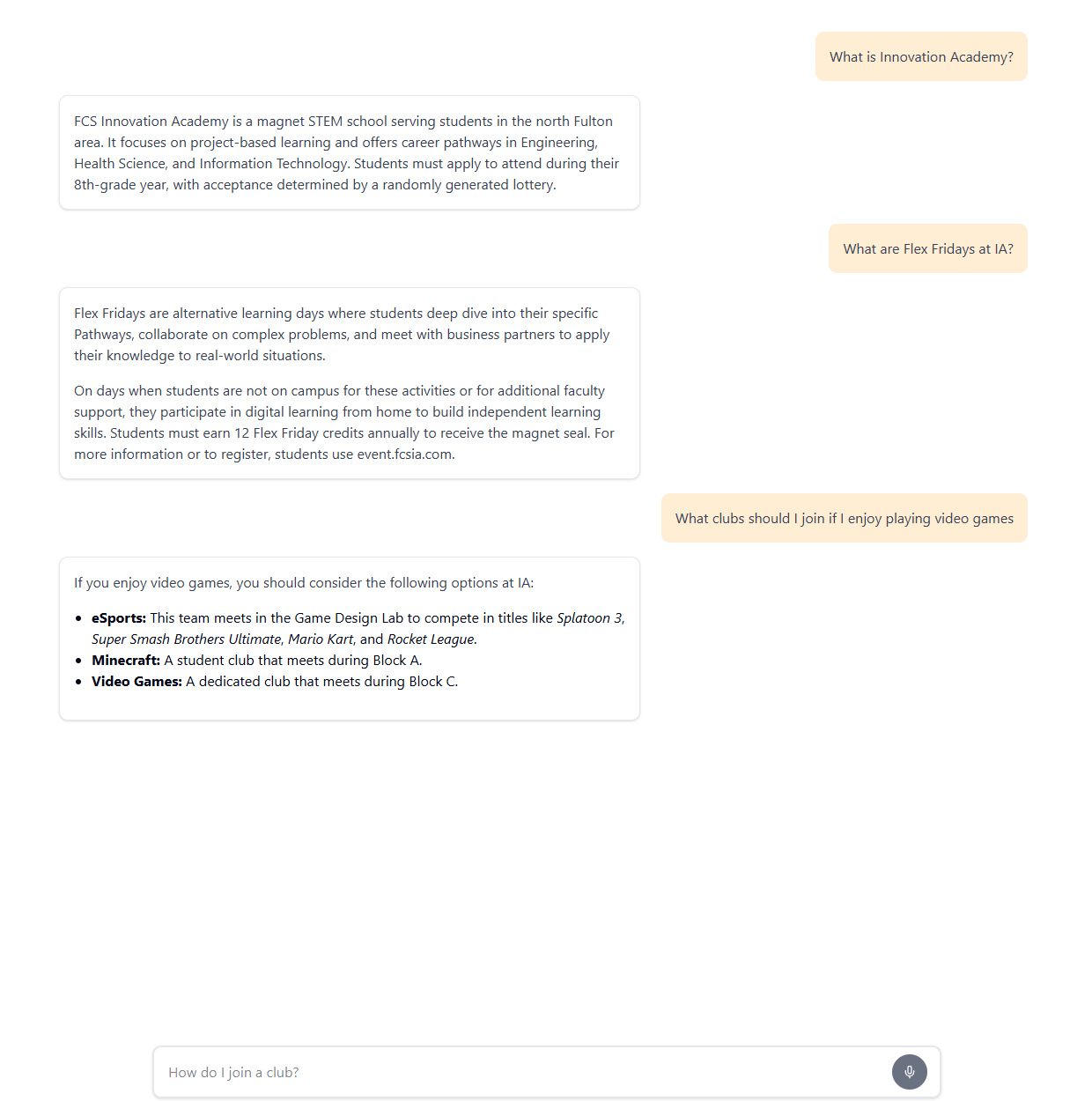

A schoolwide AI chatbot driving context-aware intelligence for FCS Innovation Academy.

The Problem

FCS Innovation Academy is a dynamic magnet school with a constant stream of events, clubs, changing rules, and general information that can form a confusing web for new and existing students alike. Students, parents, and teachers often had to dig through various school pages, handbooks, and announcements just to find simple answers.

The core challenge was to build an interface that could instantaneously deliver context-aware information to the user in a highly intuitive, conversational manner, essentially serving as a centralized brain for the entire school ecosystem.

The Solution: Waypoint

Waypoint was conceived under the non-profit Phoenix Tech Solutions to mitigate these information silos. We built a real-time, smart chat interface that users can query to get accurate answers regarding the school environment. From finding a specific club sponsor to checking the upcoming timeline of events, Waypoint centralizes it all.

System Architecture & RAG Pipeline

The “brain” of Waypoint is a custom Retrieval-Augmented Generation (RAG) pipeline. Instead of relying solely on the broad but general knowledge of an LLM, we feed it hyper-specific school documents and structured data before it answers.

[ Document Ingestion ] -> [ Chunking ] -> [ Mistral Embeddings ] -> [ Vector Store ]

[ User Query ] -> [ Context Retrieval ] (searches the Vector Store) -> [ Gemini 3 Flash Preview ] -> [ Final Answer ]

- Information Structuring: Data is ingested from `data.json` and split into meaningful chunks using LangChain’s `CharacterTextSplitter`.

- Embeddings: We use Mistral AI (`@langchain/mistralai`) to convert these chunks into numerical vectors.

- Storage & Retrieval: A custom in-memory vector store (`SimpleMemoryVectorStore`) handles fast cosine similarity searches. When a user queries Waypoint, the system retrieves the most relevant embedded chunks.

- Generation: The retrieved context is passed to Google’s Gemini 3 Flash Preview (via `@google/genai`) to synthesize an accurate, grounded response.
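Waypoint’s pipeline is written against LangChain and Mistral embeddings; the Python sketch below only illustrates the chunk, embed, and cosine-retrieve steps in the list above under stated assumptions. `toy_embed` is a hypothetical bag-of-words stand-in for a real embedding model, `chunk_text` is a simplified fixed-window splitter rather than LangChain’s exact algorithm, and the sample documents are made up.

```python
import math
import re
from collections import Counter

def chunk_text(text: str, chunk_size: int = 200, overlap: int = 40) -> list:
    """Fixed-size character windows that overlap by `overlap` characters."""
    step = chunk_size - overlap
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]

def toy_embed(text: str) -> Counter:
    # Hypothetical stand-in for an embedding model: a bag-of-words count vector.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class InMemoryVectorStore:
    """Keeps (chunk, vector) pairs in a list and ranks them by cosine similarity."""
    def __init__(self):
        self.items = []

    def add(self, chunks):
        self.items.extend((c, toy_embed(c)) for c in chunks)

    def search(self, query: str, k: int = 2):
        q = toy_embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]

store = InMemoryVectorStore()
store.add([
    "Robotics Club meets Tuesdays; Sponsor: Teacher A.",  # made-up sample entries
    "Early release days end at 1:30 PM.",
    "The library is open before first period.",
])
print(store.search("who is the robotics club sponsor", k=1))
```

The retrieved chunks would then be placed in the generation prompt; a linear scan like this is fine for a few thousand chunks, beyond which an approximate nearest-neighbor index becomes worthwhile.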

Core Features

1. Smart Chat Interface

An intuitive, modern UI built with React 19 and Radix UI, styled with TailwindCSS 4 and animated with Framer Motion. It streams AI responses in real time and preserves and cleanly displays chat history.

2. Staff & Club Directory

Users don’t just ask questions—they can explicitly look up teachers, read staff bios, and discover clubs efficiently without getting lost in generic school websites.

3. Timeline View

An interactive visual schedule plotting out upcoming events, milestones, and daily bell schedules.

4. Robust User Accounts

Seamless authentication and cloud persistence managed entirely via Supabase. We additionally integrated a custom IP-based request bucket to enforce rate limiting and prevent LLM API spam.
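The “request bucket” is read here as a per-IP token bucket, which is an assumption about the design. A minimal sketch: each IP holds up to `capacity` tokens that refill at `rate` tokens per second, and a request is allowed only if a token is available. The class name and parameters are hypothetical, and the clock is injectable so the behavior can be tested deterministically.

```python
import time
from typing import Callable, Dict, Tuple

class IpRateLimiter:
    """Per-IP token bucket rate limiter (in-memory, single process)."""

    def __init__(self, capacity: float, rate: float,
                 clock: Callable[[], float] = time.monotonic):
        self.capacity = capacity
        self.rate = rate                                   # tokens added per second
        self.clock = clock
        self.buckets: Dict[str, Tuple[float, float]] = {}  # ip -> (tokens, last_seen)

    def allow(self, ip: str) -> bool:
        now = self.clock()
        tokens, last = self.buckets.get(ip, (self.capacity, now))
        tokens = min(self.capacity, tokens + (now - last) * self.rate)  # refill for elapsed time
        if tokens >= 1.0:
            self.buckets[ip] = (tokens - 1.0, now)  # spend one token
            return True
        self.buckets[ip] = (tokens, now)
        return False
```

In production the dictionary would also need eviction of idle IPs, and a shared store if the API runs on more than one instance.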

The Outcome & Current Status

What started as a rough prototype quickly captured the interest of school administrators. Waypoint is currently in active collaboration with the Fulton County school district, aiming for a full-scale deployment to over 1,800 students, staff members, and thousands of prospective parents.

The integration of a modern React frontend with a carefully tuned AI backend suggests that intelligent, accessible information systems are the future of educational infrastructure.